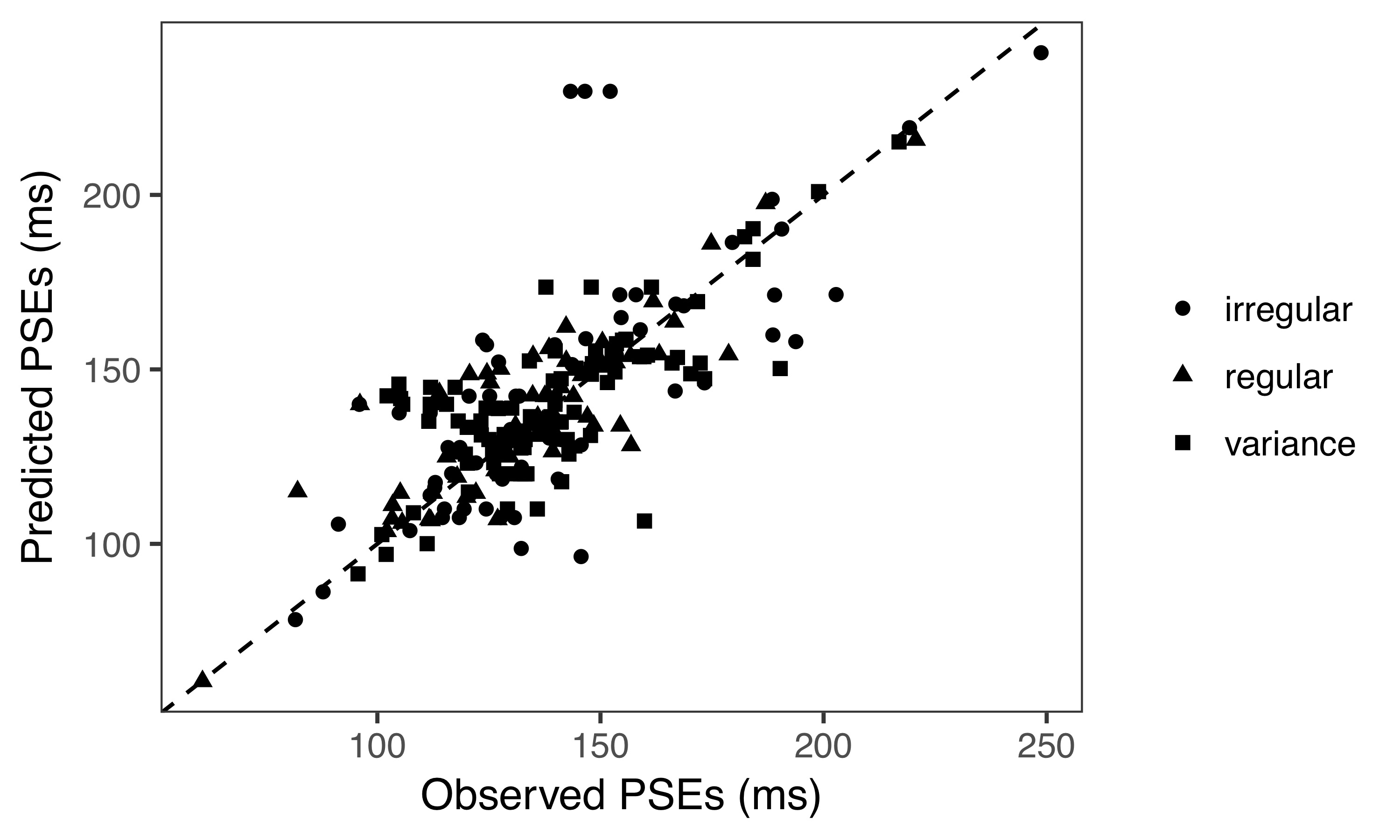

In our multisensory world, we often rely more on auditory information than on visual input for temporal processing. One typical demonstration of this is that the rate of auditory flutter assimilates the rate of concurrent visual flicker. To date, however, this auditory dominance effect has largely been studied using regular auditory rhythms. It thus remains unclear whether irregular rhythms would have a similar impact on visual temporal processing, what information is extracted from the auditory sequence that comes to influence visual timing, and how the auditory and visual temporal rates are integrated in quantitative terms. We investigated these questions by assessing, and modeling, the influence of a task-irrelevant auditory sequence on the type of “Ternus apparent motion”: group motion versus element motion. The type of motion seen critically depends on the time interval between the two Ternus display frames. We found that an irrelevant auditory sequence preceding the Ternus display modulates the visual interval, making observers perceive either more group motion or more element motion. This biasing effect manifests whether the auditory sequence is regular or irregular, and it is based on a summary statistic extracted from the sequential intervals: their geometric mean. However, the audiovisual interaction depends on the discrepancy between the mean auditory and visual intervals: if it becomes too large, no interaction occurs, which can be quantitatively described by a partial Bayesian integration model. Overall, our findings reveal a cross-modal perceptual averaging principle that may underlie complex audiovisual interactions in many everyday dynamic situations.
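To make the summary statistic concrete, here is a minimal sketch of how a geometric mean of auditory intervals could be computed and how it differs from the arithmetic mean. The function names and the interval values are hypothetical illustrations, not the stimuli or analysis code used in the study.

```python
import math


def geometric_mean(intervals_ms):
    """Geometric mean of positive intervals, computed in log space."""
    if not intervals_ms or any(x <= 0 for x in intervals_ms):
        raise ValueError("intervals must be a non-empty list of positive numbers")
    mean_log = sum(math.log(x) for x in intervals_ms) / len(intervals_ms)
    return math.exp(mean_log)


def arithmetic_mean(intervals_ms):
    """Plain arithmetic mean, shown only for comparison."""
    return sum(intervals_ms) / len(intervals_ms)


# Hypothetical inter-onset intervals in milliseconds (assumed values).
regular = [100.0, 100.0, 100.0, 100.0]
irregular = [50.0, 200.0, 50.0, 200.0]

for name, seq in (("regular", regular), ("irregular", irregular)):
    print(name, round(geometric_mean(seq), 1), round(arithmetic_mean(seq), 1))
```

In this made-up example the two sequences share a geometric mean of 100 ms, while the arithmetic mean of the irregular sequence is 125 ms. If observers average in the geometric sense, the two sequences would be expected to bias the perceived visual interval in a similar way.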

Together with Lihan Chen, Xiaolin Zhou, and Hermann Müller, we recently investigated how temporal averaging of an auditory sequence influences visual apparent motion. This work was published in J. Exp. Psychol. Gen., 2018 Sep 13. doi: 10.1037/xge0000487.

Behavioral data and source code are available on GitHub.